Bridging Perceptual and Analytic Dynamics via Function Alignment

Yuxuan Wu (MBZUAI) Gus Xia (MBZUAI, NYU Shanghai)

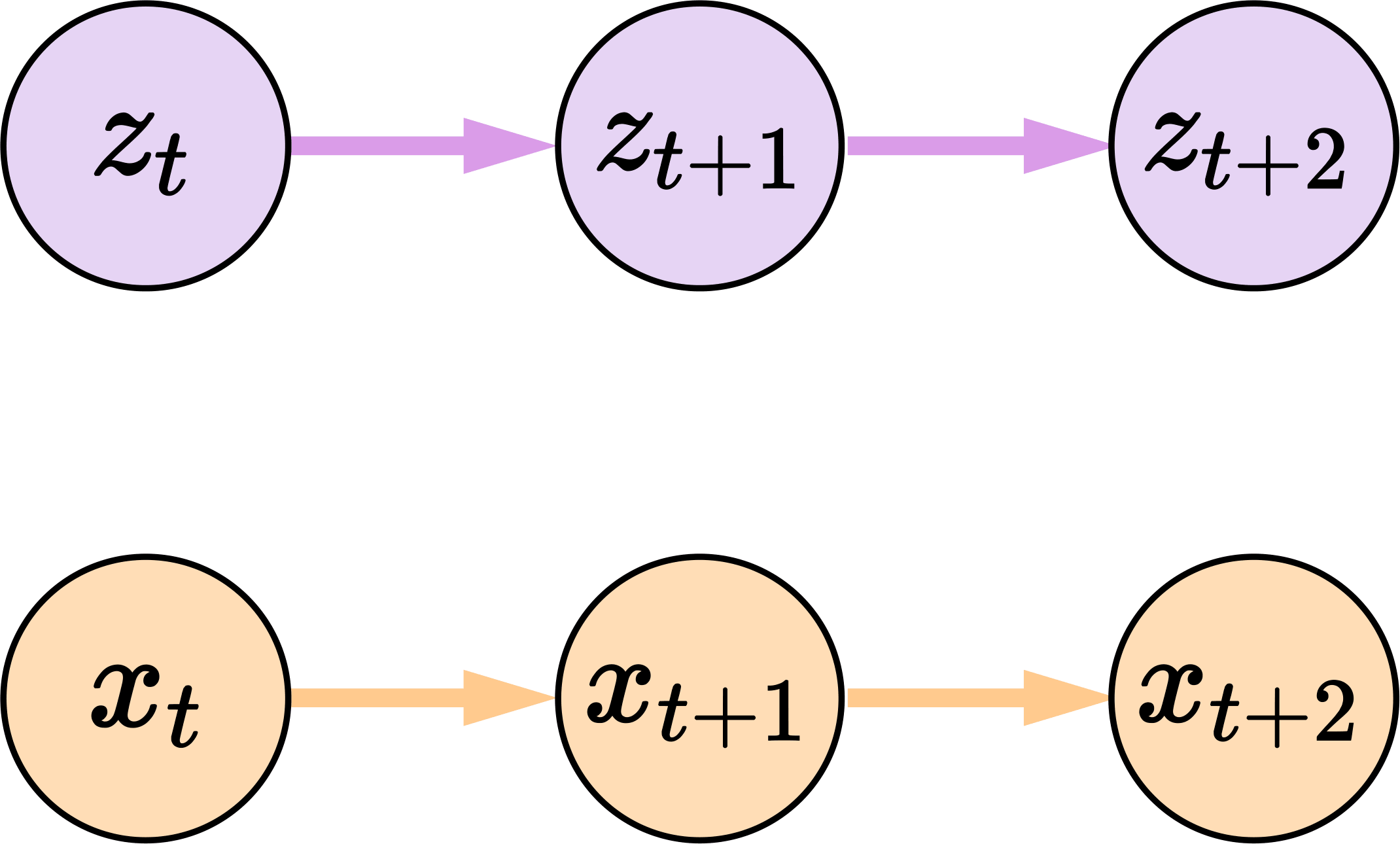

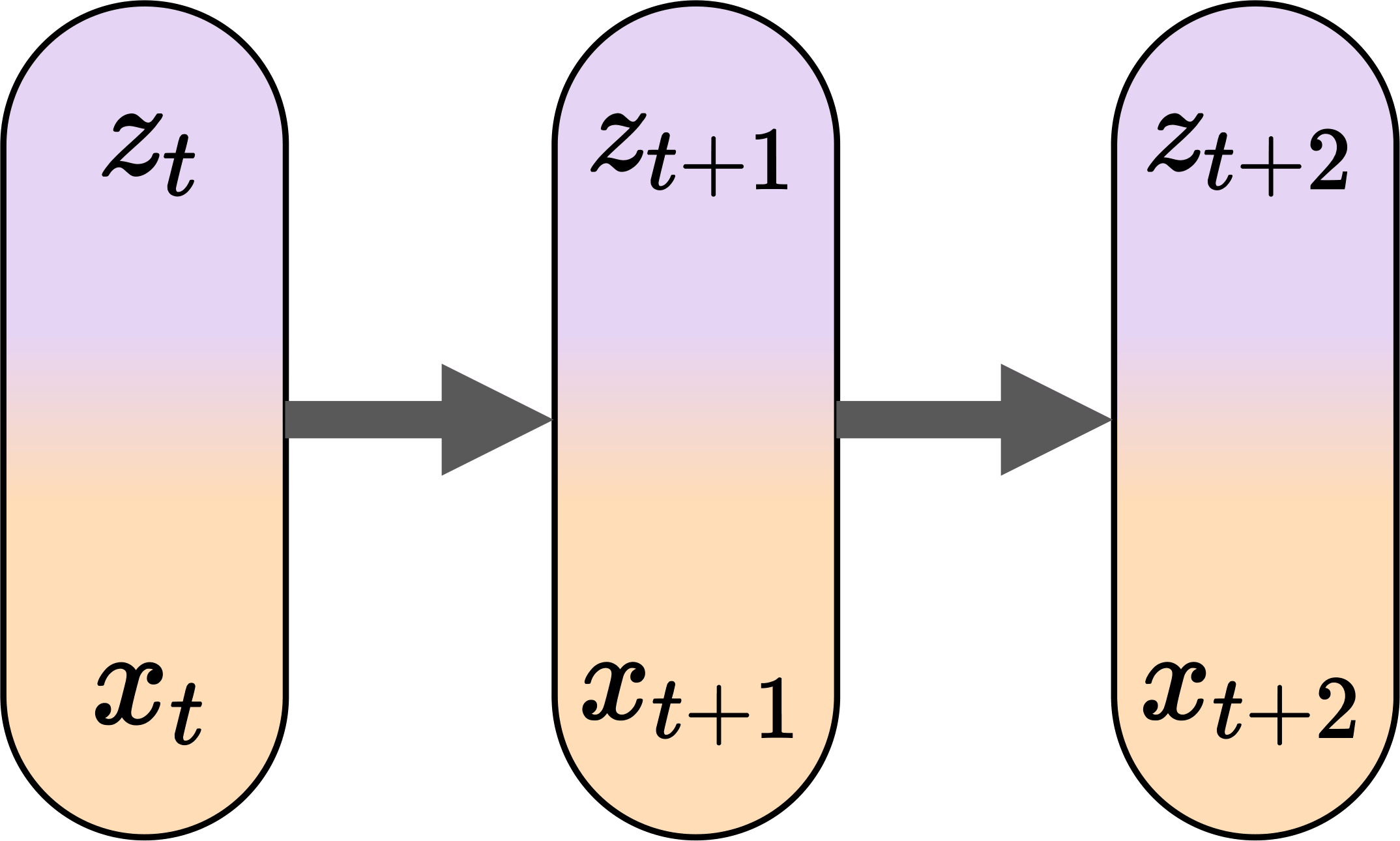

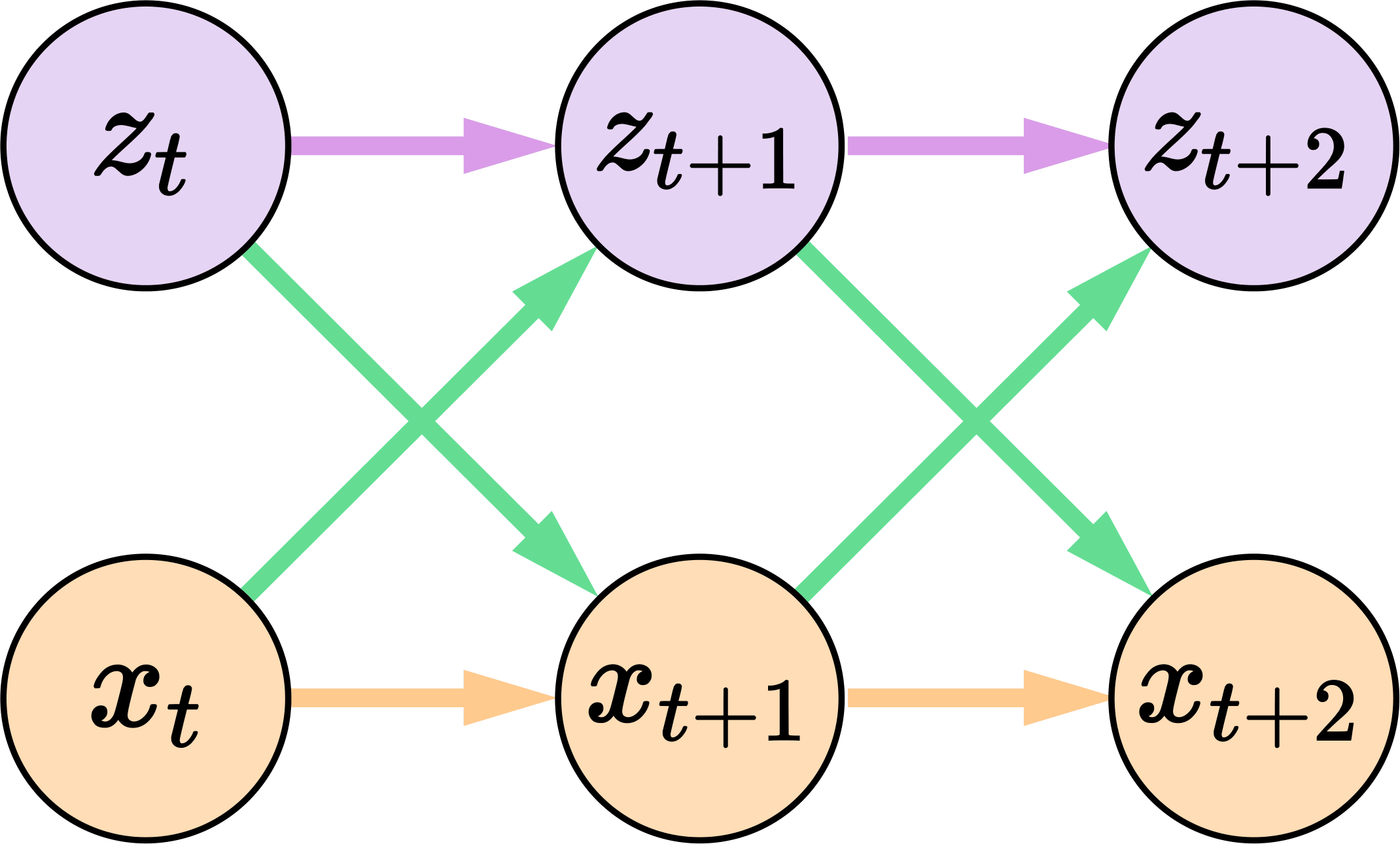

Human modeling of complex processes often involves multiple representations that capture different aspects of the same underlying reality. While most recent approaches unify such representations into a single predictive model, this unification can obscure the distinct functional roles of each representation. Inspired by the recently proposed function alignment framework, we study an alternative paradigm in which heterogeneous predictive dynamics are preserved and coupled through bidirectional alignment at the level of functions. We consider a setting with two time-paired representations of the same process: a high-dimensional perceptual sequence and a compact analytic state sequence, each governed by its own autoregressive dynamics. Rather than collapsing them into a unified model, we align their predictive functions using lightweight adapter modules that allow each dynamics model to incorporate signals from the other during rollout. We conduct experiments on two physical prediction tasks in which the two dynamic processes play different functional roles, and show that function alignment significantly improves long-horizon stability during joint rollout in both the perceptual and analytic domains. Together, our results provide a concrete instantiation of function alignment between perceptual and analytic dynamics, along with empirical evidence that preserving heterogeneous predictive dynamics can be critical for stable sequential prediction.

Methodology

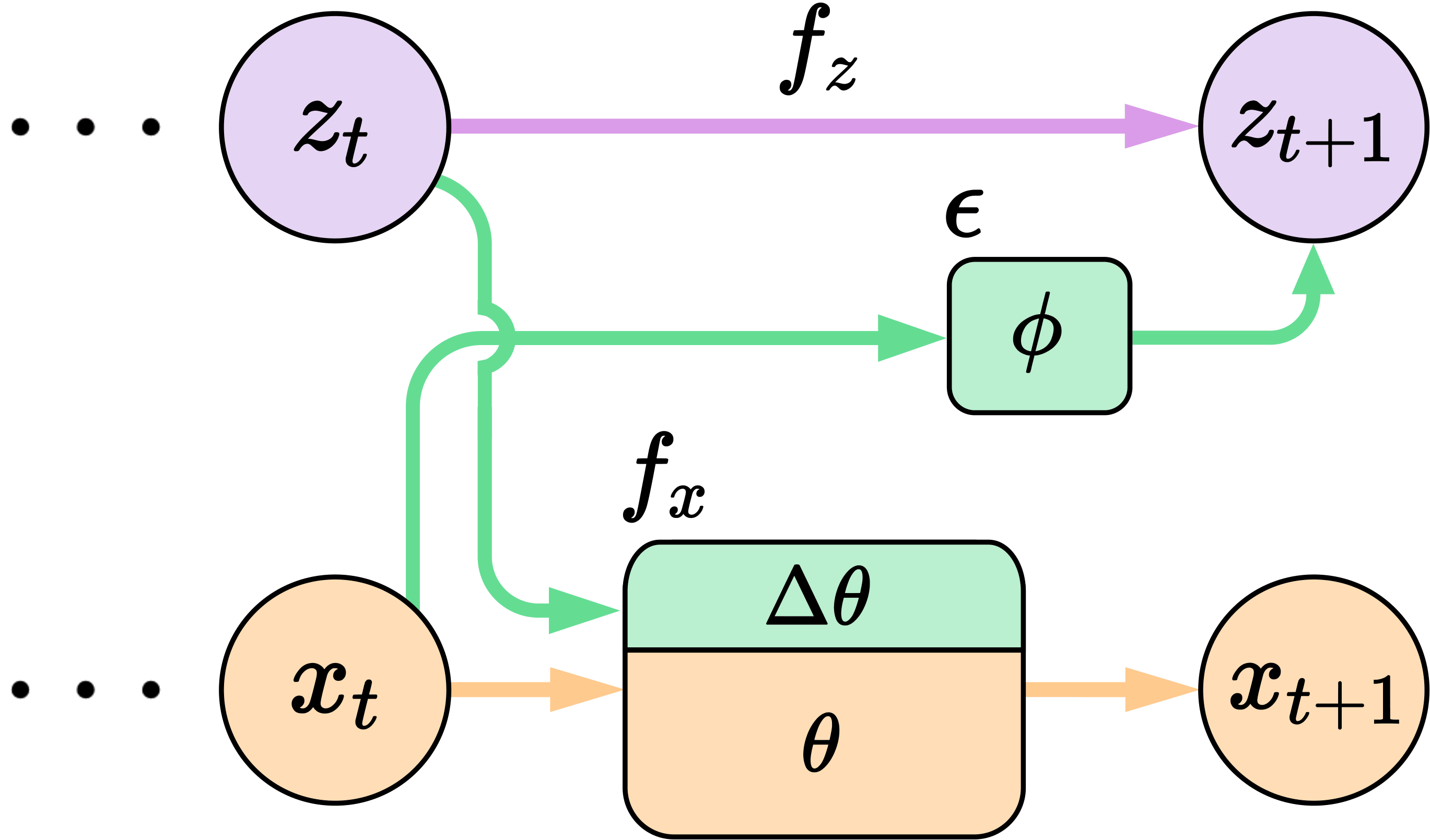

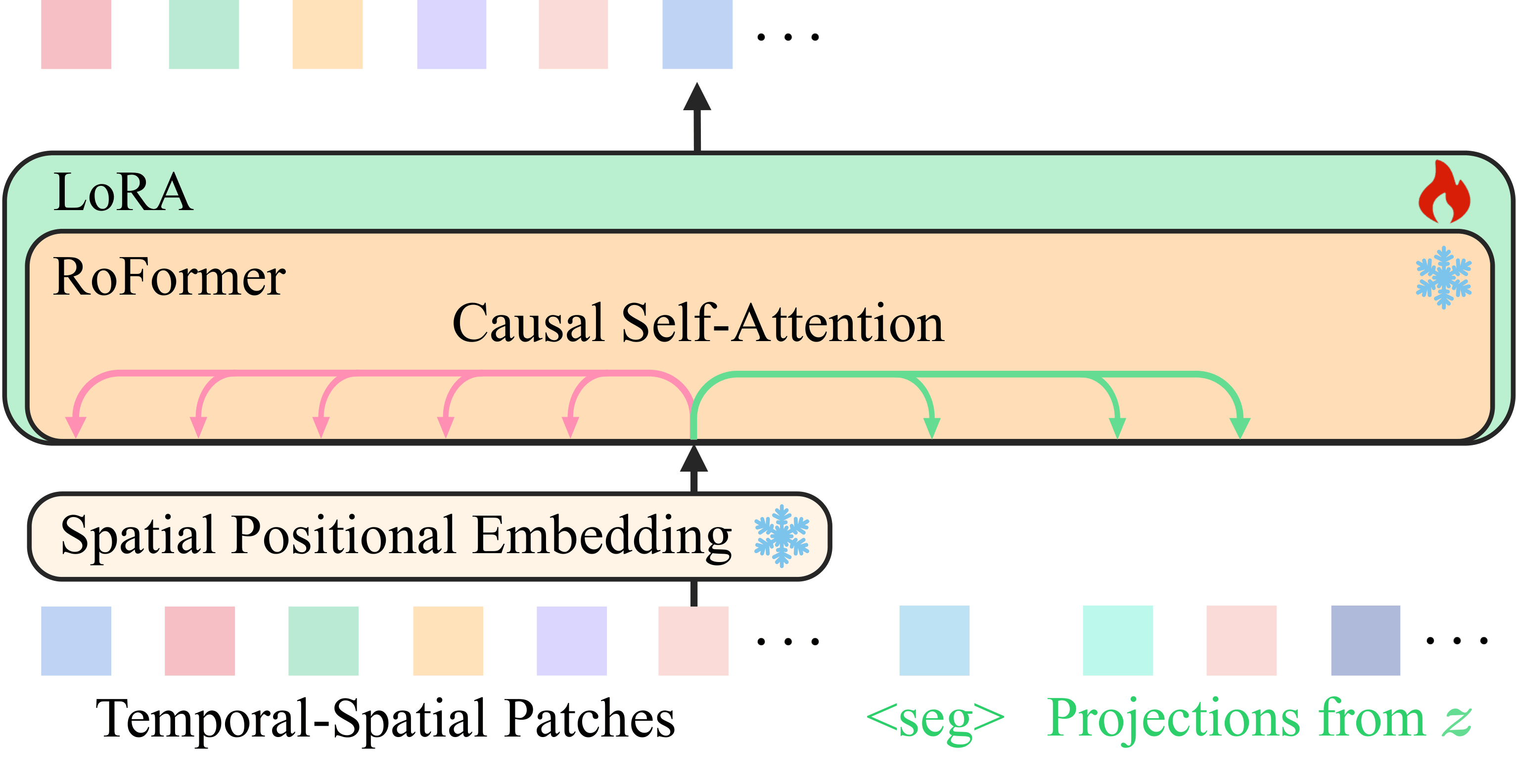

Function alignment couples a perceptual dynamics model and an analytic dynamics model without flattening them into one shared state transition. Analytic history conditions the perceptual rollout, while perceptual evidence supplies a corrective signal back into the analytic transition.
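The coupling described above can be pictured as a joint rollout loop. The sketch below is a minimal toy illustration under our own assumptions, not the authors' implementation: the names `co_generate`, `cond_zx`, and `corr_xz` are hypothetical, each base model sees only its last state, and both adapters act as additive offsets. On this page the analytic-to-perceptual direction is realized with projected analytic tokens used as causal context rather than an additive term, so read this as a schematic of the information flow only.

```python
from typing import Callable, List, Tuple

Vec = List[float]

def add(a: Vec, b: Vec) -> Vec:
    return [ai + bi for ai, bi in zip(a, b)]

def co_generate(
    x0: Vec,
    z0: Vec,
    f_x: Callable[[Vec], Vec],            # frozen base perceptual dynamics (hypothetical)
    f_z: Callable[[Vec], Vec],            # frozen base analytic dynamics (hypothetical)
    cond_zx: Callable[[List[Vec]], Vec],  # hypothetical adapter: analytic history -> perceptual offset
    corr_xz: Callable[[Vec, Vec], Vec],   # hypothetical adapter: (new x, analytic proposal) -> correction
    steps: int,
) -> Tuple[List[Vec], List[Vec]]:
    xs, zs = [list(x0)], [list(z0)]
    for _ in range(steps):
        # Analytic -> perceptual: the analytic history conditions the next perceptual prediction.
        x_next = add(f_x(xs[-1]), cond_zx(zs))
        # Perceptual -> analytic: the new perceptual prediction corrects the analytic proposal.
        z_prop = f_z(zs[-1])
        z_next = add(z_prop, corr_xz(x_next, z_prop))
        xs.append(x_next)
        zs.append(z_next)
    return xs, zs

# Toy 1-D usage: the "perceptual" model sees velocity 1.0 plus a wind drift of 0.5,
# while the analytic model only knows velocity 1.0.
step_x = lambda x: [x[0] + 1.5]
step_z = lambda z: [z[0] + 1.0]
no_cond = lambda zs: [0.0]
xs, zs_aligned = co_generate([0.0], [0.0], step_x, step_z, no_cond,
                             lambda x, zp: [x[0] - zp[0]], steps=5)
_, zs_base = co_generate([0.0], [0.0], step_x, step_z, no_cond,
                         lambda x, zp: [0.0], steps=5)
print(zs_base[-1], zs_aligned[-1])  # [5.0] [7.5]
```

With a zero correction the analytic rollout ignores the wind and falls behind; with a full correction it tracks the perceptual stream. A learned adapter would instead produce a state-dependent correction.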

For the broader conceptual framing behind function alignment, see the position paper Function Alignment: A New Theory of Mind and Intelligence, Part I: Foundations.

| Function Alignment | Analytic-to-Perceptual Adaptation |
|---|---|
| Function alignment introduces analytic-to-perceptual conditioning and perceptual-to-analytic corrective updates while preserving the base predictive functions. | Projected analytic tokens are added as causal context, and the adaptation is trained with LoRA instead of rewriting the pretrained perceptual dynamics wholesale. |
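A toy version of the two ingredients in the right-hand column, low-rank adaptation of a frozen weight and causally ordered analytic tokens, is sketched below. Everything here is an illustrative assumption rather than the authors' code: `LoRALinear`, `interleave_with_causal_mask`, the projection `proj`, the one-token-per-frame layout, and the ordering `[x_0, z_0, x_1, z_1, ...]` (under which frame `x_t` attends to analytic tokens `z_0 .. z_{t-1}`) are all hypothetical. A real VideoGPT operates on a grid of discrete latent codes rather than one token per frame, and where LoRA is applied inside the network is not specified here.

```python
import random
from typing import List

Vec = List[float]
Mat = List[List[float]]

def matvec(M: Mat, v: Vec) -> Vec:
    return [sum(m * x for m, x in zip(row, v)) for row in M]

class LoRALinear:
    """Frozen weight W plus a trainable low-rank update B @ A scaled by alpha / rank."""

    def __init__(self, W: Mat, rank: int, alpha: float = 1.0, seed: int = 0):
        rng = random.Random(seed)
        d_out, d_in = len(W), len(W[0])
        self.W = W                                                    # pretrained, kept frozen
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]                 # zero init: no-op at start
        self.scale = alpha / rank

    def __call__(self, x: Vec) -> Vec:
        base = matvec(self.W, x)
        delta = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * d for b, d in zip(base, delta)]

def interleave_with_causal_mask(x_tokens: List[Vec], z_tokens: List[Vec]):
    """Order tokens as [x_0, z_0, x_1, z_1, ...]; mask[i][j] is True when i may attend to j (j <= i)."""
    seq, kinds = [], []
    for x, z in zip(x_tokens, z_tokens):
        seq += [x, z]
        kinds += ["x", "z"]
    n = len(seq)
    mask = [[j <= i for j in range(n)] for i in range(n)]
    return seq, kinds, mask

W = [[1.0, 0.0], [0.0, 2.0]]
layer = LoRALinear(W, rank=1, alpha=2.0)
out = layer([3.0, 4.0])  # identical to W @ x while B is still zero

proj = [[0.5, 0.5]]  # hypothetical projection: 2-D analytic state -> 1-D token embedding
z_tok = [matvec(proj, z) for z in ([1.0, 1.0], [2.0, 2.0])]
x_tok = [[10.0], [20.0]]
seq, kinds, mask = interleave_with_causal_mask(x_tok, z_tok)
print(out, kinds, mask[2])  # [3.0, 8.0] ['x', 'z', 'x', 'z'] [True, True, True, False]
```

Because `B` starts at zero, the adapted layer initially reproduces the pretrained output exactly, and only the small `A` and `B` matrices would be trained.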

Function alignment is a plug-and-play mechanism: it preserves the original dynamics and adds alignment modules around them. In general, it can be used both for co-generation and for conditional generation, but this page focuses only on co-generation results.
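To make the two usage modes concrete, the sketch below runs the same pair of (already aligned) step functions either as co-generation, where both streams are predicted, or as conditional generation, where the analytic stream is supplied and only the perceptual stream is predicted. The function `rollout`, the argument `z_given`, the toy step functions, and the step order (perceptual first, then analytic) are all hypothetical choices for illustration.

```python
from typing import Callable, List, Optional, Tuple

Vec = List[float]

def rollout(
    x0: Vec,
    z0: Vec,
    step_x: Callable[[Vec, List[Vec]], Vec],  # hypothetical aligned perceptual step: (x_t, z history) -> x_{t+1}
    step_z: Callable[[Vec, Vec], Vec],        # hypothetical aligned analytic step: (z_t, x_{t+1}) -> z_{t+1}
    steps: int,
    z_given: Optional[List[Vec]] = None,
) -> Tuple[List[Vec], List[Vec]]:
    """Co-generation if z_given is None; otherwise conditional generation of x given z."""
    xs, zs = [x0], [z0]
    for t in range(1, steps + 1):
        x_next = step_x(xs[-1], zs)
        # In conditional mode the analytic state is read from the given sequence instead of predicted.
        z_next = step_z(zs[-1], x_next) if z_given is None else z_given[t]
        xs.append(x_next)
        zs.append(z_next)
    return xs, zs

# Toy steps: the "frame" is twice the latest analytic value; the analytic state counts up by 1.
render = lambda x, z_hist: [2.0 * z_hist[-1][0]]
count = lambda z, x_new: [z[0] + 1.0]

xs_co, zs_co = rollout([0.0], [0.0], render, count, steps=3)
z_seq = [[0.0], [10.0], [20.0], [30.0]]
xs_cond, zs_cond = rollout([0.0], [0.0], render, count, steps=3, z_given=z_seq)
print(xs_co, xs_cond)  # [[0.0], [0.0], [2.0], [4.0]] [[0.0], [0.0], [20.0], [40.0]]
```

Only the source of the analytic state changes between the two modes; the step functions themselves are untouched.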

Experiment 1: Wind-Affected Bouncing Ball

Dataset Setting

| Description | Dataset Demo |
|---|---|
| A ball moves in 3D under gravity, collisions, and a latent wind field. The analytic state keeps only position and velocity, so the analytic dynamics miss the wind information visible in the perceptual stream. Here, the perceptual dynamics are a VideoGPT that captures visual wind cues, while the analytic dynamics are a compact rule-based kinematic model. We test whether function alignment can combine their advantages to achieve accurate long-horizon co-generation. | Solid ball: ground-truth trajectory; dimmed ball: analytic kinematic rollout without wind effects (for visualization only). |
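The gap between the two streams can be reproduced with a few lines of kinematics. The sketch below is a hypothetical stand-in for a rule-based kinematic model, not the dataset generator: it assumes semi-implicit Euler integration, a floor at height 0, a restitution coefficient of 0.9, and a constant wind acceleration in place of the latent wind field. The analytic rollout simply omits the wind term, so its horizontal position never moves, while the wind-affected trajectory drifts.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
GRAVITY: Vec3 = (0.0, 0.0, -9.81)  # assumed: third axis points up

def kinematic_step(pos: Vec3, vel: Vec3, dt: float = 0.01, restitution: float = 0.9,
                   extra_accel: Vec3 = (0.0, 0.0, 0.0)) -> Tuple[Vec3, Vec3]:
    """One semi-implicit Euler step with gravity, an optional extra acceleration, and a floor bounce."""
    vel = tuple(v + dt * (g + a) for v, g, a in zip(vel, GRAVITY, extra_accel))
    pos = tuple(p + dt * v for p, v in zip(pos, vel))
    if pos[2] < 0.0:
        # Floor collision: mirror the position above the floor and damp the vertical velocity.
        pos = (pos[0], pos[1], -pos[2])
        vel = (vel[0], vel[1], -restitution * vel[2])
    return pos, vel

def simulate(pos: Vec3, vel: Vec3, n_steps: int, wind: Vec3 = (0.0, 0.0, 0.0)) -> List[Vec3]:
    traj = [pos]
    for _ in range(n_steps):
        pos, vel = kinematic_step(pos, vel, extra_accel=wind)
        traj.append(pos)
    return traj

start_pos, start_vel = (0.0, 0.0, 1.0), (0.0, 0.0, 0.0)
analytic = simulate(start_pos, start_vel, 200)                         # no wind, as the analytic model assumes
with_wind = simulate(start_pos, start_vel, 200, wind=(0.5, 0.0, 0.0))  # toy constant wind
print(analytic[-1][0], round(with_wind[-1][0], 3))  # 0.0 1.005
```

After 2 simulated seconds the wind-free model is already about 1 unit off horizontally, and this gap keeps growing, which is the information the perceptual stream can supply.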

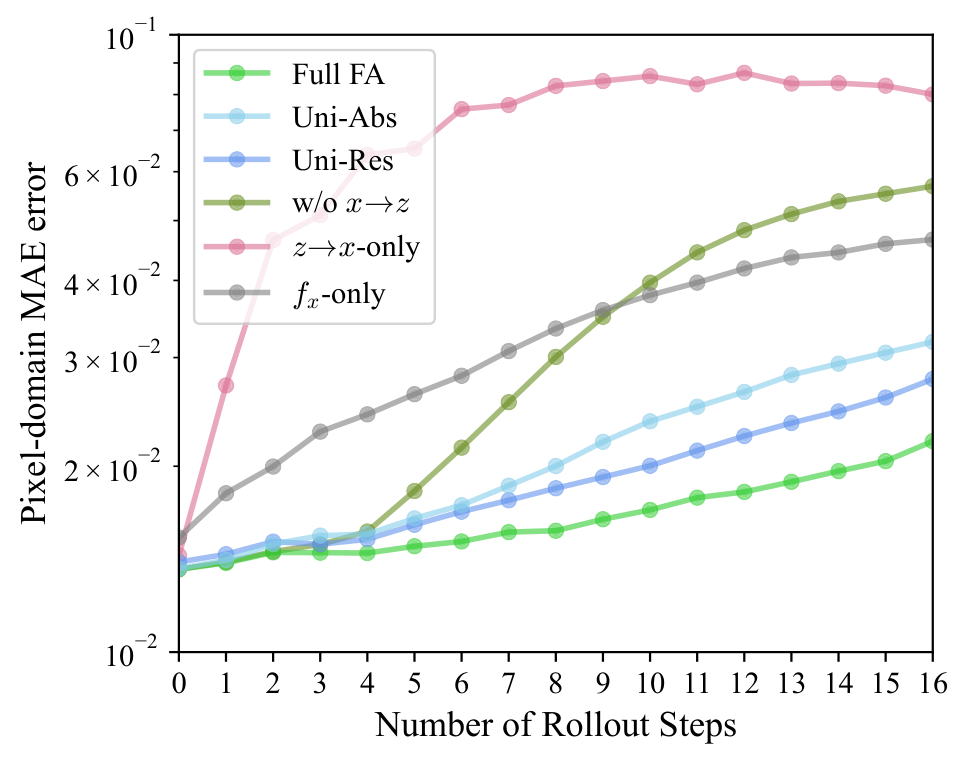

x Domain: Perceptual Prediction

| Quantitative | Qualitative |
|---|---|
| Rollout error in the perceptual domain under co-generation. | Top row: ground truth. Bottom row, from left to right: Full FA, Unified, w/o x→z, and f_x only. The pink square marks the frames predicted from 3 given starting frames. |
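The qualitative panel shows an autoregressive rollout seeded with 3 ground-truth frames. The sketch below illustrates that protocol and a per-frame error curve on a toy one-pixel "video". The sliding window of 3 frames, the linear-extrapolation predictor, and the choice of per-frame mean squared error are assumptions for illustration; the page does not state which error metric the quantitative plot uses.

```python
from typing import Callable, List

Frame = List[float]  # a flattened frame (toy: one pixel)

def autoregressive_rollout(context: List[Frame], predict_next: Callable[[List[Frame]], Frame],
                           horizon: int, window: int = 3) -> List[Frame]:
    """Feed each prediction back as input; only the most recent `window` frames are visible."""
    frames = list(context)
    for _ in range(horizon):
        frames.append(predict_next(frames[-window:]))
    return frames[len(context):]  # predicted frames only

def per_frame_mse(pred: List[Frame], target: List[Frame]) -> List[float]:
    return [sum((p - t) ** 2 for p, t in zip(pf, tf)) / len(pf) for pf, tf in zip(pred, target)]

# Toy ground truth: pixel value k**2 at step k. The predictor extrapolates linearly from the last two frames.
truth = [[float(k * k)] for k in range(6)]
linear = lambda recent: [2.0 * recent[-1][0] - recent[-2][0]]
pred = autoregressive_rollout(truth[:3], linear, horizon=3)
print(per_frame_mse(pred, truth[3:]))  # [4.0, 36.0, 144.0]
```

The error grows with the horizon because each prediction is fed back as input, which is the compounding effect that long-horizon rollout plots expose.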

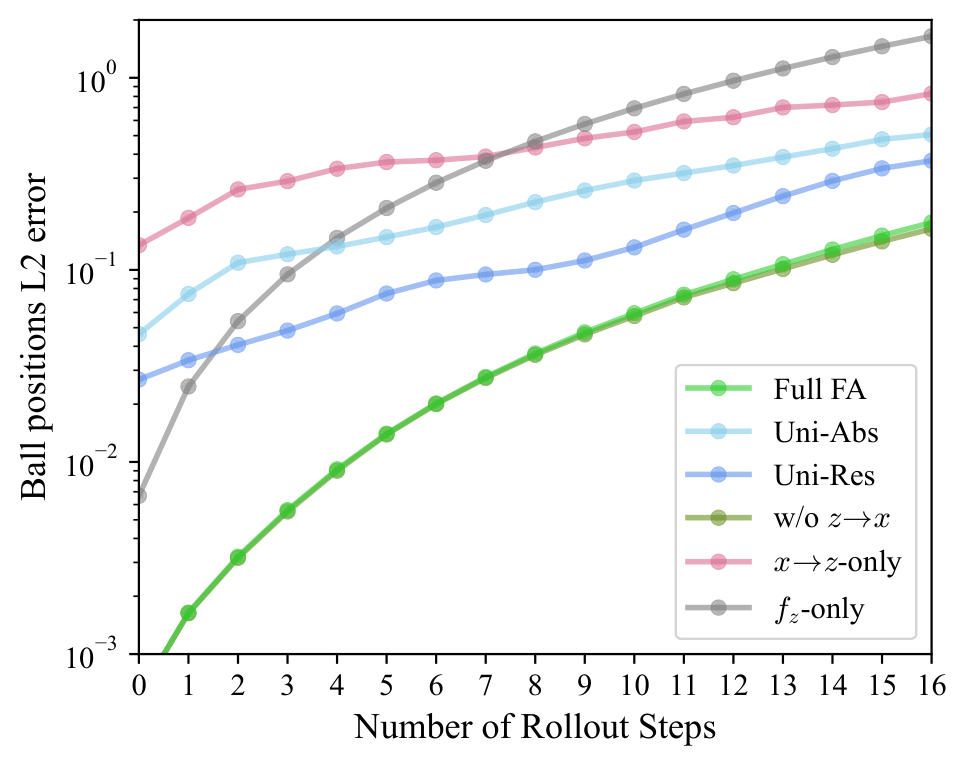

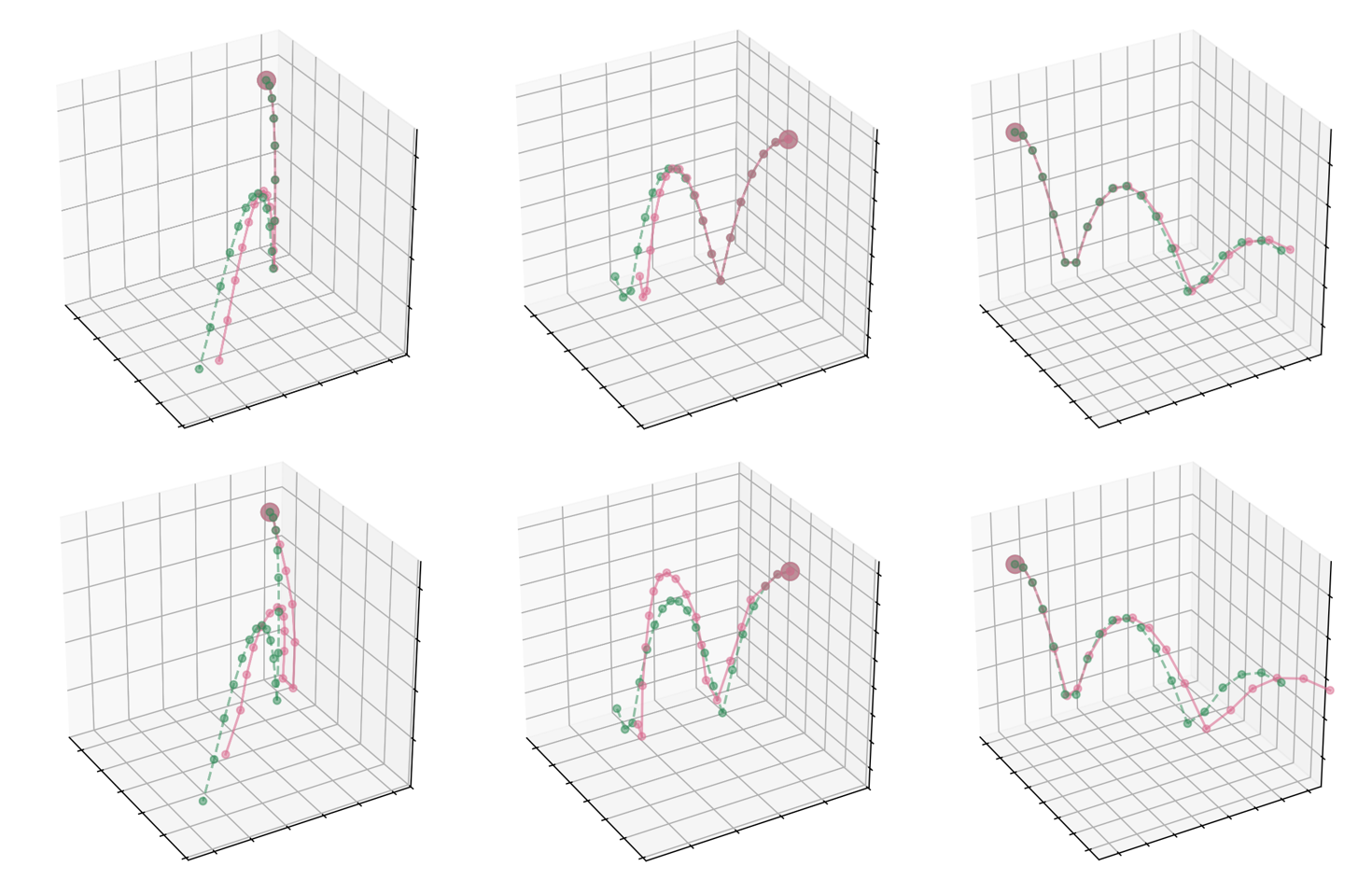

z Domain: Analytic Prediction

| Quantitative | Qualitative |
|---|---|
| Rollout error in the analytic state space under co-generation. | Predicted trajectories in 3D position space. Top: Full FA (pink) vs. ground truth (green). Bottom: unified dynamics (pink) vs. ground truth (green). Larger point: shared initial condition. |
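A per-step error curve in the analytic domain can be computed by averaging, at every rollout step, the Euclidean distance between predicted and ground-truth 3D positions across trajectories. The helper below is a hypothetical sketch; the page does not specify the exact metric behind the quantitative plot. Because both rollouts share the initial condition, the error at step 0 is zero.

```python
import math
from typing import List, Sequence

Point = Sequence[float]

def mean_position_error(pred_batch: List[List[Point]], true_batch: List[List[Point]]) -> List[float]:
    """pred_batch[b][t], true_batch[b][t]: 3D positions. Returns the mean L2 error at each step t."""
    horizon = len(true_batch[0])
    curve = []
    for t in range(horizon):
        dists = [math.dist(p[t], g[t]) for p, g in zip(pred_batch, true_batch)]
        curve.append(sum(dists) / len(dists))
    return curve

# Two toy trajectories with a shared start; the ground truth stays at the origin.
pred = [[(0.0, 0.0, 0.0), (3.0, 4.0, 0.0)],
        [(0.0, 0.0, 0.0), (0.0, 0.0, 1.0)]]
true = [[(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)],
        [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]]
print(mean_position_error(pred, true))  # [0.0, 3.0]
```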

Experiment 2: Double Pendulum

Dataset Setting

| Description | Dataset Demo |
|---|---|
| The double pendulum environment pairs rendered video frames with analytic states containing joint angles and angular velocities. The analytic dynamics use Euler integration, so the state model is compact and physically meaningful but numerically approximate in a chaotic regime. Here, the perceptual dynamics are a VideoGPT that directly models the visual observations, while the analytic dynamics are again a compact rule-based model. | Solid pendulum: ground-truth motion; dimmed pendulum: Euler-integrated analytic rollout (for visualization only). |
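To see why Euler integration is only approximate in this regime, the sketch below integrates the standard frictionless double-pendulum equations of motion (point masses on massless rods, angles measured from the downward vertical) with explicit Euler and with classical fourth-order Runge-Kutta, and compares how far each drifts from the initial total energy. The unit masses and lengths, step size, horizon, and initial angles are assumptions; the page does not give the simulator's parameters.

```python
import math
from typing import Callable, Tuple

State = Tuple[float, float, float, float]  # (theta1, theta2, omega1, omega2)
G, M1, M2, L1, L2 = 9.81, 1.0, 1.0, 1.0, 1.0  # assumed physical parameters

def deriv(s: State) -> State:
    """Time derivative of the double-pendulum state (angles from the downward vertical)."""
    th1, th2, w1, w2 = s
    d = th1 - th2
    den = 2 * M1 + M2 - M2 * math.cos(2 * d)
    a1 = (-G * (2 * M1 + M2) * math.sin(th1)
          - M2 * G * math.sin(th1 - 2 * th2)
          - 2 * math.sin(d) * M2 * (w2 ** 2 * L2 + w1 ** 2 * L1 * math.cos(d))) / (L1 * den)
    a2 = (2 * math.sin(d) * (w1 ** 2 * L1 * (M1 + M2)
                             + G * (M1 + M2) * math.cos(th1)
                             + w2 ** 2 * L2 * M2 * math.cos(d))) / (L2 * den)
    return (w1, w2, a1, a2)

def axpy(s: State, k: State, h: float) -> State:
    return tuple(si + h * ki for si, ki in zip(s, k))

def euler_step(s: State, dt: float) -> State:
    return axpy(s, deriv(s), dt)

def rk4_step(s: State, dt: float) -> State:
    k1 = deriv(s)
    k2 = deriv(axpy(s, k1, dt / 2))
    k3 = deriv(axpy(s, k2, dt / 2))
    k4 = deriv(axpy(s, k3, dt))
    return tuple(si + dt / 6 * (a + 2 * b + 2 * c + e)
                 for si, a, b, c, e in zip(s, k1, k2, k3, k4))

def energy(s: State) -> float:
    th1, th2, w1, w2 = s
    kinetic = (0.5 * M1 * (L1 * w1) ** 2
               + 0.5 * M2 * ((L1 * w1) ** 2 + (L2 * w2) ** 2
                             + 2 * L1 * L2 * w1 * w2 * math.cos(th1 - th2)))
    potential = -(M1 + M2) * G * L1 * math.cos(th1) - M2 * G * L2 * math.cos(th2)
    return kinetic + potential

def integrate(step: Callable[[State, float], State], s: State, dt: float, n: int) -> State:
    for _ in range(n):
        s = step(s, dt)
    return s

s0: State = (math.pi / 2, math.pi / 2, 0.0, 0.0)  # both arms horizontal, released from rest
dt, n = 0.01, 200                                # 2 seconds of simulated time
e0 = energy(s0)
euler_drift = abs(energy(integrate(euler_step, s0, dt, n)) - e0)
rk4_drift = abs(energy(integrate(rk4_step, s0, dt, n)) - e0)
print(f"Euler energy drift: {euler_drift:.4f}, RK4 energy drift: {rk4_drift:.2e}")
```

Explicit Euler typically injects energy into oscillatory systems, and in a chaotic regime small per-step errors also grow quickly. This is consistent with describing the analytic model as compact and physically meaningful but numerically approximate.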

x Domain: Perceptual Prediction

| Quantitative | Qualitative |
|---|---|
| Rollout error in the perceptual domain under co-generation. | Top row: ground truth. Bottom row, from left to right: Full FA, Unified, w/o x→z, and f_x only. The pink square marks the frames predicted from 1 given starting frame. |

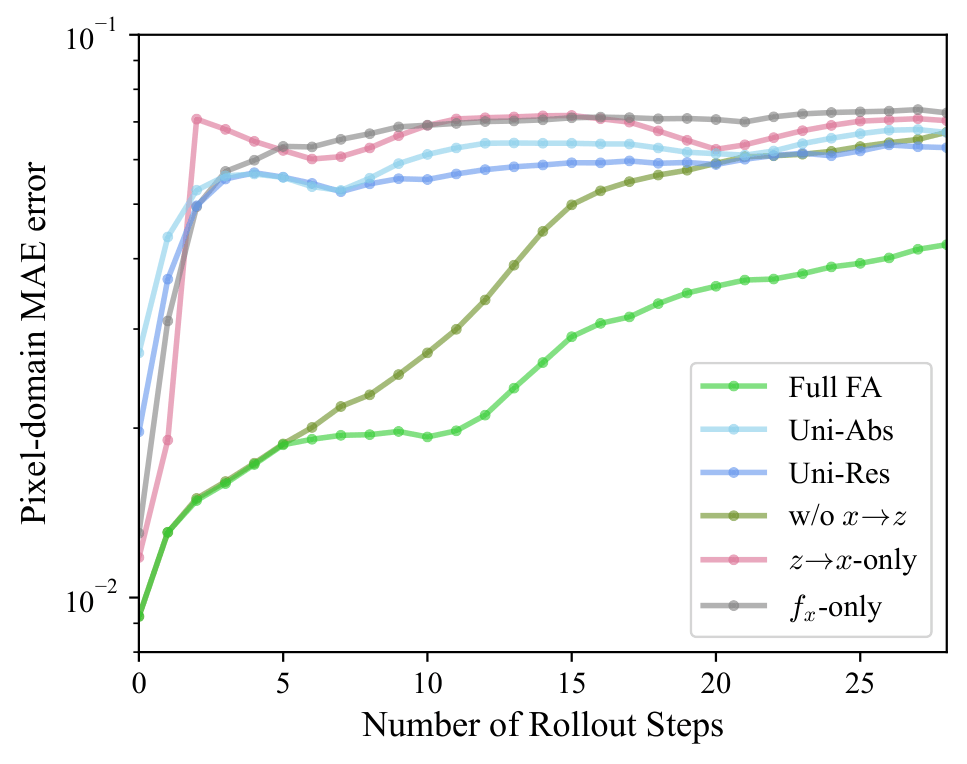

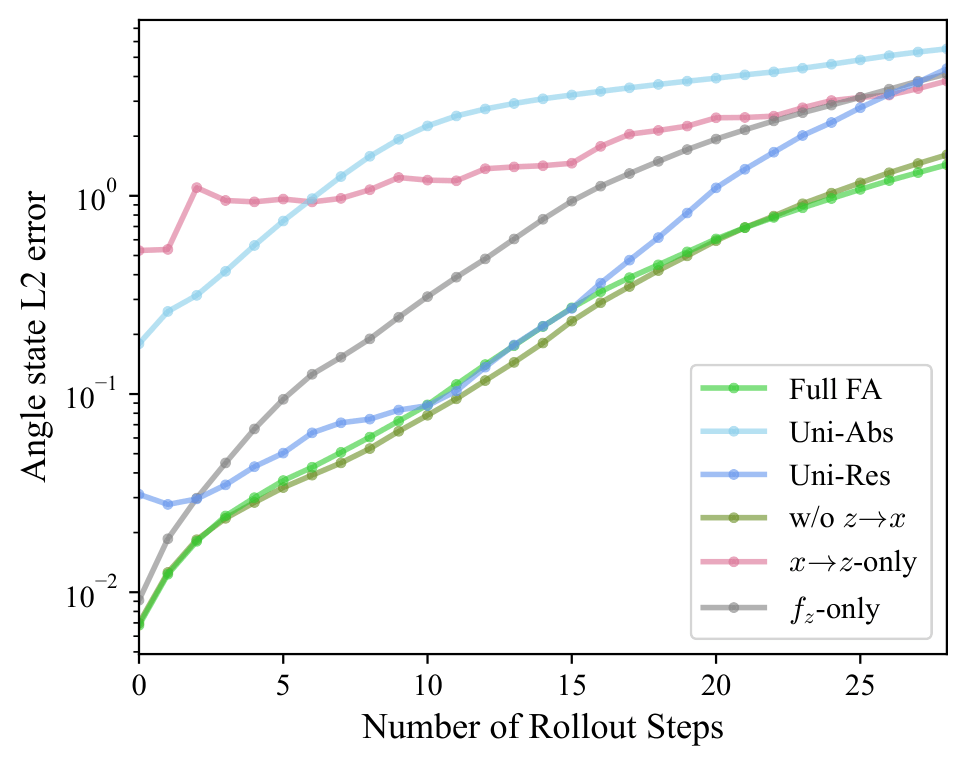

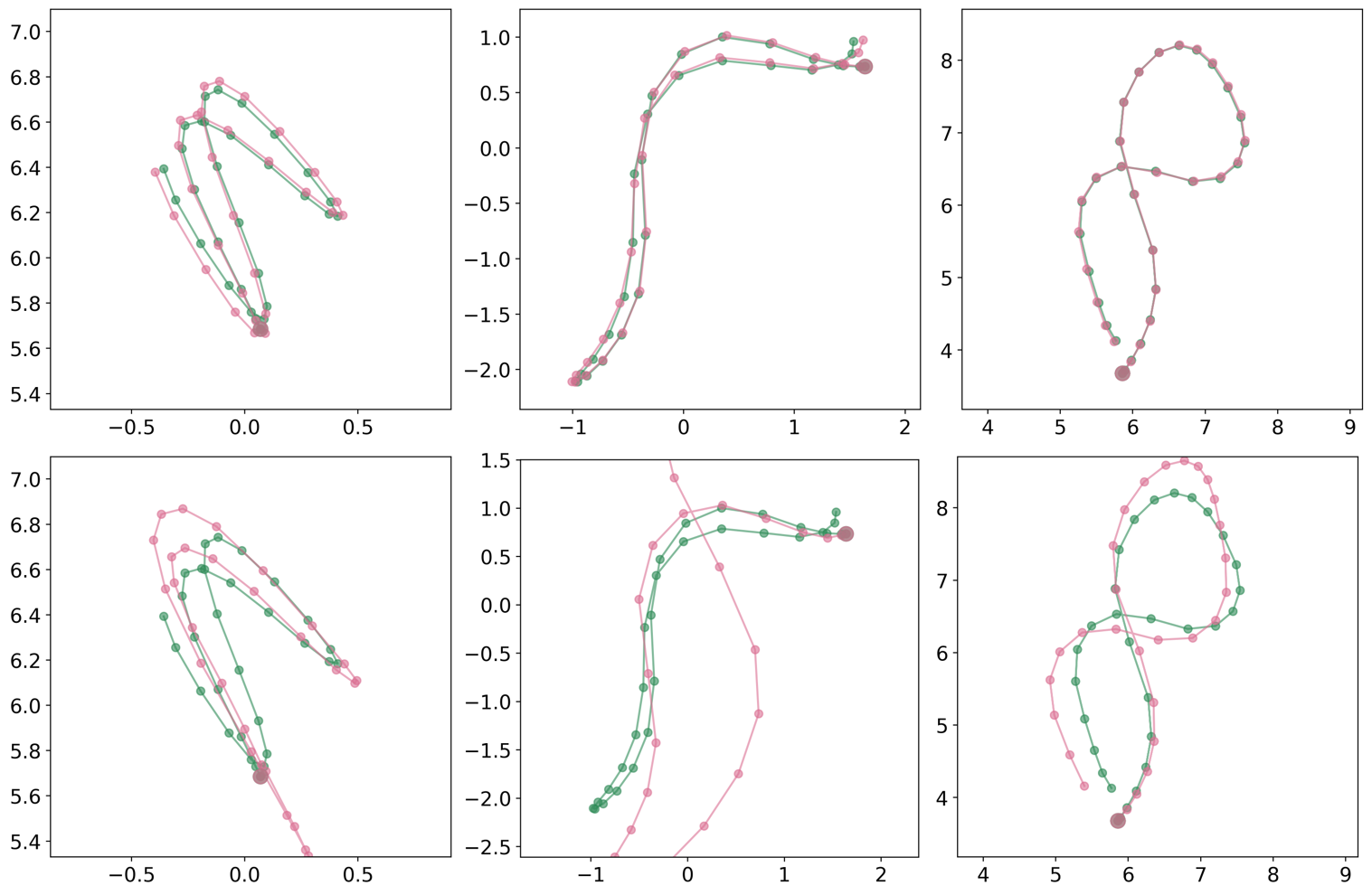

z Domain: Analytic Prediction

| Quantitative | Qualitative |
|---|---|
| Rollout error in the analytic state space under co-generation. | Predicted trajectories in joint-angle space. Top: Full FA (pink) vs. ground truth (green). Bottom: unified dynamics (pink) vs. ground truth (green). Larger point: shared initial condition. |
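When errors are measured in joint-angle space, angles that differ by a full turn describe the same configuration, so a naive difference can overstate the error near ±π. The helper below wraps differences into [-π, π) before averaging over joints. It is a hypothetical sketch; the page does not say whether the reported errors apply such wrapping.

```python
import math
from typing import Sequence

def wrap_angle(a: float) -> float:
    """Map an angle in radians to the interval [-pi, pi)."""
    return (a + math.pi) % (2 * math.pi) - math.pi

def joint_angle_error(pred: Sequence[float], true: Sequence[float]) -> float:
    """Mean absolute wrapped difference over joints, e.g. (theta1, theta2)."""
    return sum(abs(wrap_angle(p - t)) for p, t in zip(pred, true)) / len(pred)

pred = (math.pi - 0.1, 0.0)
true = (-math.pi + 0.1, 0.0)
naive = sum(abs(p - t) for p, t in zip(pred, true)) / len(pred)
print(round(naive, 4), round(joint_angle_error(pred, true), 4))  # 3.0416 0.1
```

The two first-joint angles are only 0.2 rad apart on the circle, which the wrapped error reflects, while the naive difference reports almost a full half-turn.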

Citation

@inproceedings{wu2026bridging,
  title = {Bridging Perceptual and Analytic Dynamics via Function Alignment},
  author = {Wu, Yuxuan and Xia, Gus},
  booktitle = {ICLR 2026 ReAlign Workshop},
  year = {2026},
  url = {https://openreview.net/forum?id=TuuyJZrDm5}
}